TensorFlow LSTM/GRU: reset states once per epoch, not for each new batch

2023-09-03 08:40:23

Author: 社会套路深,谁拿谁当真

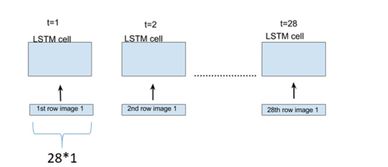

I am training the following GRU-based model. Note that I pass the argument stateful=True to the GRU constructor.

    import tensorflow as tf

    class LearningToSurpriseModel(tf.keras.Model):
        def __init__(self, vocab_size, embedding_dim, rnn_units):
            super().__init__()
            self.embedding = tf.keras.layers.Embedding(vocab_size, embedding_dim)
            self.gru = tf.keras.layers.GRU(rnn_units,
                                           stateful=True,
                                           return_sequences=True,
                                           return_state=True,
                                           reset_after=True)
            self.dense = tf.keras.layers.Dense(vocab_size)

        def call(self, inputs, states=None, return_state=False, training=False):
            x = inputs
            x = self.embedding(x, training=training)
            if states is None:
                states = self.gru.get_initial_state(x)
            x, states = self.gru(x, initial_state=states, training=training)
            x = self.dense(x, training=training)

            if return_state:
                return x, states
            else:
                return x

        @tf.function
        def train_step(self, inputs):
            ...  # [training step defined here]

I instantiate my model:

    model = LearningToSurpriseModel(
        vocab_size=len(ids_from_chars.get_vocabulary()),
        embedding_dim=embedding_dim,
        rnn_units=rnn_units
    )

[compile and do other setup]

and train for EPOCHS epochs:

    for i in range(EPOCHS):
        model.fit(train_dataset, validation_data=validation_dataset, epochs=1, callbacks=[EarlyS], verbose=1)
        model.reset_states()

Edit

TensorFlow implements the reset_states function for Models as follows:

    def reset_states(self):
        for layer in self.layers:
            if hasattr(layer, 'reset_states') and getattr(layer, 'stateful', False):
                layer.reset_states()

Does this mean that states can only be reset when stateful=False? That is what I infer from the condition on getattr(layer, 'stateful', False).

Recommended answer

You can try resetting the states in a custom Callback:

    model = LearningToSurpriseModel(
        vocab_size=len(ids_from_chars.get_vocabulary()),
        embedding_dim=embedding_dim,
        rnn_units=rnn_units
    )

    gru_layer = model.layers[1]  # expected to be the GRU layer

    class CustomCallback(tf.keras.callbacks.Callback):
        def __init__(self, gru_layer):
            super().__init__()
            self.gru_layer = gru_layer

        def on_epoch_end(self, epoch, logs=None):
            # Reset the GRU state once per epoch, after the last batch
            self.gru_layer.reset_states()

    model.fit(train_dataset, validation_data=validation_dataset, epochs=1, callbacks=[EarlyS, CustomCallback(gru_layer)], verbose=1)
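To make the intended timing concrete, here is a framework-free toy sketch of the same idea. It does not use TensorFlow: `ToyStatefulCell`, `ResetAtEpochEnd`, and `toy_fit` are hypothetical stand-ins that only mimic the shape of a stateful layer, a callback with an `on_epoch_end` hook, and a training loop. It assumes the hook fires once after the last batch of each epoch, so the state carries over between batches but not between epochs.

```python
class ToyStatefulCell:
    """Hypothetical stand-in for a stateful recurrent layer.

    The "state" is just a running sum carried from one batch to the next.
    """

    def __init__(self):
        self.state = 0

    def __call__(self, batch):
        self.state += sum(batch)
        return self.state

    def reset_states(self):
        self.state = 0


class ResetAtEpochEnd:
    """Mimics the shape of a callback with an on_epoch_end hook (not a Keras class)."""

    def __init__(self, cell):
        self.cell = cell

    def on_epoch_end(self, epoch, logs=None):
        self.cell.reset_states()


def toy_fit(cell, batches, epochs, callbacks):
    """Toy training loop: run every batch, then fire the epoch-end hooks."""
    history = []
    for epoch in range(epochs):
        # State is carried across the batches of one epoch.
        outputs = [cell(batch) for batch in batches]
        history.append(outputs)
        # Hooks run once per epoch, after the last batch.
        for callback in callbacks:
            callback.on_epoch_end(epoch)
    return history


batches = [[1, 2], [3, 4]]
cell = ToyStatefulCell()
history = toy_fit(cell, batches, epochs=2, callbacks=[ResetAtEpochEnd(cell)])
print(history)  # [[3, 10], [3, 10]]
```

Within each epoch the second batch sees the state left by the first (3, then 10), and both epochs start from zero because the reset happens only at the epoch boundary. Without the callback, the second epoch in this toy would continue from 10 instead.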

See also this post for information on when to reset states.
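Regarding the edit in the question: in plain Python, the third argument of `getattr` is only a fallback used when the attribute is missing, so the quoted condition appears to select layers whose `stateful` attribute is truthy, not those with stateful=False. The sketch below checks this in isolation with hypothetical stand-in classes (they are not Keras layers) and a free function that copies the quoted loop.

```python
class StatefulLayer:
    """Hypothetical stand-in for a layer built with stateful=True."""
    stateful = True

    def __init__(self):
        self.reset_calls = 0

    def reset_states(self):
        self.reset_calls += 1


class StatelessLayer(StatefulLayer):
    """Same stand-in, but with stateful=False."""
    stateful = False


class PlainLayer:
    """Stand-in for a layer with neither `stateful` nor `reset_states`."""


def reset_states(layers):
    # Same condition as in the quoted reset_states implementation.
    for layer in layers:
        if hasattr(layer, "reset_states") and getattr(layer, "stateful", False):
            layer.reset_states()


layers = [StatefulLayer(), StatelessLayer(), PlainLayer()]
reset_states(layers)
reset_counts = [getattr(layer, "reset_calls", None) for layer in layers]
print(reset_counts)  # [1, 0, None]
```

Only the stand-in with `stateful = True` is reset. The default `False` matters only for objects like `PlainLayer` that have no `stateful` attribute at all.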
